Lower reconstruction error. Worse model quality. Here’s why that happens — and what it means for LLM quantization research.

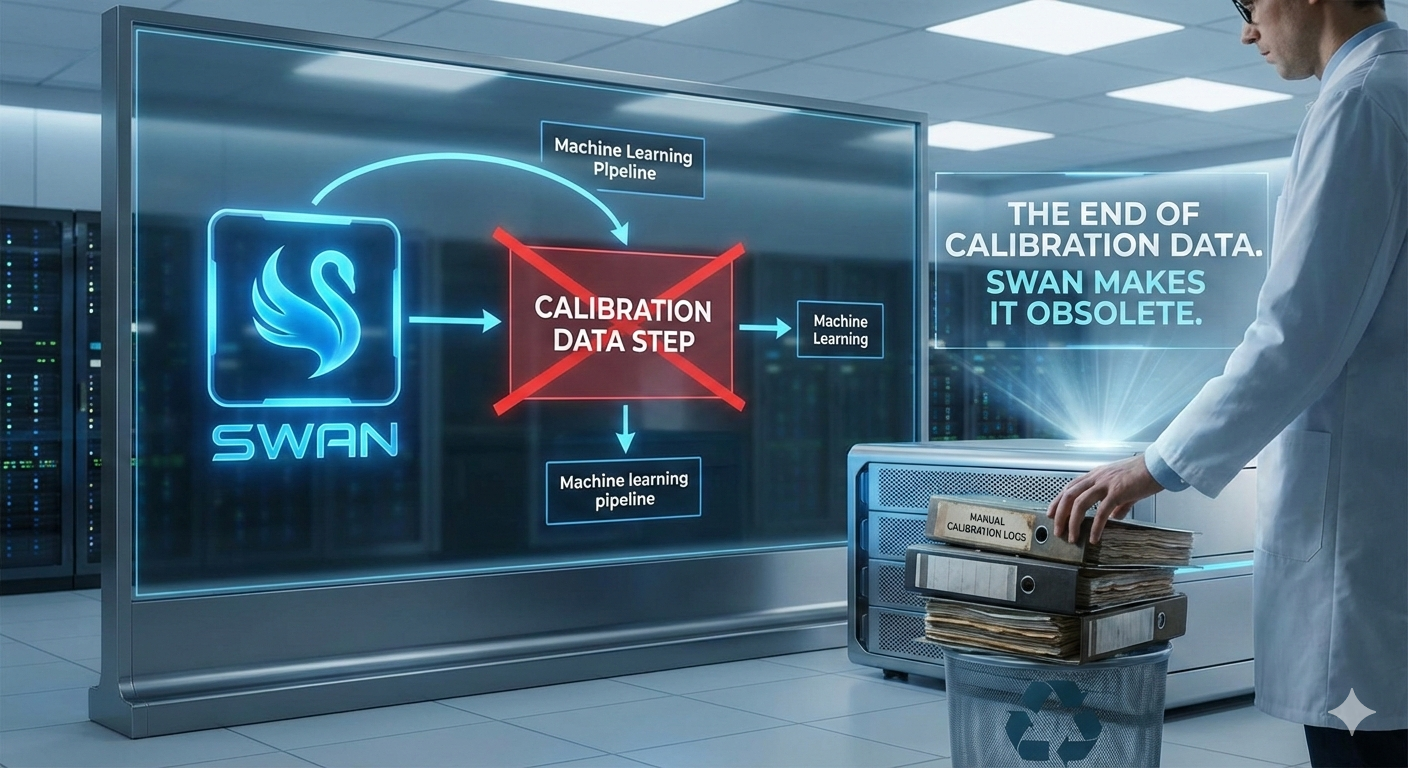

When we released MINT, our data-free mixed-precision quantization framework, a natural question followed: why use round-to-nearest (RTN) — the simplest possible quantizer — as the base method? Surely replacing it with something more sophisticated would improve results?

We thought so too. We tested four alternatives: HQQ (iterative optimization), Hadamard rotation (outlier spreading), data-free OBQ (Hessian-based compensation), and data-free AdaRound (learned rounding). Every one of them either failed to improve model quality or was undeployable. Two of the failures revealed something more interesting than a successful upgrade would have.

The experiment

All tests used Qwen3.5-35B-A3B at 4-bit precision with group size 64 on MLX. For each method, we measured two things: per-tensor normalized RMSE (how well the quantized weights reconstruct the originals) and model-level perplexity on WikiText-2 (how well the model actually performs). The assumption — shared by most of the quantization literature — is that reducing the first should improve the second.
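For readers who want to reproduce the per-tensor side of this setup, here is a minimal NumPy sketch of group-wise 4-bit round-to-nearest with a min/max range and group size 64, plus a normalized RMSE. It assumes an affine (scale plus offset) format and defines NRMSE as the Frobenius norm of the residual divided by the Frobenius norm of the original weights. Both are assumptions for illustration: MINT's exact NRMSE definition and MLX's scale/bias computation and packing may differ. The random matrix is a stand-in, not a Qwen tensor.

```python
import numpy as np

def rtn_quantize(w: np.ndarray, bits: int = 4, group_size: int = 64) -> np.ndarray:
    """Fake-quantize a 2-D weight matrix with per-group min/max round-to-nearest.

    Returns dequantized weights so they can be compared with the original.
    Assumes the input dimension (last axis) is a multiple of group_size.
    """
    rows, cols = w.shape
    assert cols % group_size == 0, "input dim must be a multiple of group_size"
    levels = 2**bits - 1
    g = w.reshape(rows, cols // group_size, group_size)
    lo = g.min(axis=-1, keepdims=True)
    hi = g.max(axis=-1, keepdims=True)
    # Affine grid: lo maps to level 0 and hi to the top level, so no weight is
    # clipped and each group's extremes are reproduced up to float rounding.
    scale = np.maximum(hi - lo, 1e-12) / levels
    q = np.clip(np.round((g - lo) / scale), 0, levels)
    return (q * scale + lo).reshape(rows, cols)

def nrmse(w: np.ndarray, w_hat: np.ndarray) -> float:
    """Residual norm relative to the norm of the original weights."""
    return float(np.linalg.norm(w - w_hat) / np.linalg.norm(w))

rng = np.random.default_rng(0)
w = rng.normal(scale=0.02, size=(128, 256)).astype(np.float32)
w_hat = rtn_quantize(w)
print(f"NRMSE at 4 bits, group 64: {nrmse(w, w_hat):.4f}")
```

The min/max choice is what the outlier argument later in this post relies on: the grid is stretched to cover every value in the group, at the cost of coarser steps for the bulk of the weights.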

HQQ: better reconstruction, worse perplexity

HQQ (Half-Quadratic Quantization) uses iterative optimization to find better scale and zero-point parameters than RTN’s simple min/max approach. It’s fully data-free, produces the same output format, and slots directly into our pipeline.

The per-tensor results were encouraging. HQQ reduced reconstruction error by 2.96% on average. All 30 tensors we tested improved, with gains ranging from 2.86% to 3.70%. We verified lossless conversion to MLX’s packed format.

Then we ran perplexity. The model got worse: median PPL rose from 6.783 to 6.857, a relative degradation of roughly 1.1%. Better reconstruction, worse output.

The likely explanation is that RTN’s min/max range, while crude, never clips anything: the smallest and largest weight in each group land on (or very near) the end points of the quantization grid, so the extreme values survive. Those outliers disproportionately affect attention patterns and output distributions. HQQ instead optimizes an error objective aggregated over each whole group, which can sacrifice these critical outliers to reduce average error. The reconstruction metric says the weights are more accurate overall; the model says the weights that matter most got less accurate.

AdaRound: the most elegant null result

AdaRound learns per-element rounding directions (round up vs. round down) by optimizing over calibration inputs. In the data-free setting, we replaced the activation-weighted objective with a weight-only objective and optimized soft rounding variables via 200 iterations of Adam with sigmoid annealing.

The result: exactly 0.000% NRMSE change on all 30 tensors. Not approximately zero — identically zero.

Without activation data to create an asymmetric loss landscape, the optimal rounding direction for every element is simply “round to the nearest representable value,” which is what RTN computes for free. Unweighted MSE is a sum of independent per-element terms, so each element’s choice can be made on its own, and the nearer grid point always wins. Gradient descent therefore converges to the RTN solution, because RTN is the exact minimizer of unweighted MSE on a fixed grid. AdaRound’s power comes entirely from knowing which directions of error the model’s activations are sensitive to. Remove that knowledge and there’s nothing left to learn.
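A brute-force check makes the collapse concrete. The sketch below enumerates every round-up/round-down pattern for one short row and scores it either with unweighted squared error or with an activation-weighted error eᵀHe, where H = XᵀX is built from random, correlated stand-in activations (an assumption for illustration, not calibration data from our runs). It is exhaustive search rather than AdaRound’s soft-rounding optimization, but it finds the optimum that optimization is trying to reach.

```python
import itertools
from typing import Optional

import numpy as np

def best_rounding(w_scaled: np.ndarray, h: Optional[np.ndarray] = None) -> np.ndarray:
    """Exhaustively choose floor or ceil per element to minimize e^T H e.

    w_scaled holds weights already divided by the quantization step, so the
    grid is the integers. h=None means the unweighted loss (H = identity).
    """
    n = w_scaled.size
    h = np.eye(n) if h is None else h
    floor = np.floor(w_scaled)
    best, best_loss = None, np.inf
    for ups in itertools.product((0.0, 1.0), repeat=n):
        q = floor + np.array(ups)   # one candidate rounding pattern
        e = q - w_scaled            # per-element rounding error
        loss = float(e @ h @ e)
        if loss < best_loss:
            best, best_loss = q, loss
    return best

rng = np.random.default_rng(0)
w = rng.normal(scale=3.0, size=8)   # 8 elements -> 256 patterns
rtn = np.round(w)

# Data-free (unweighted) objective: the exhaustive optimum is exactly RTN.
print(np.array_equal(best_rounding(w), rtn))  # True

# Activation-weighted objective: the optimum is free to move away from RTN.
x = rng.normal(size=(256, 8)) @ rng.normal(size=(8, 8))
print(best_rounding(w, x.T @ x) - rtn)
```

The first result holds for any input without exact ties, because the unweighted loss separates per element. Only a non-diagonal H couples the elements and gives “round the other way” a reason to win.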

Hadamard rotation: a deployment dead end

Random Hadamard rotation — the technique behind QuIP and HIGGS — produced the largest per-tensor improvement of any method: 7.92% NRMSE reduction on average, with all 30 tensors improving. But it requires modifying the inference path (every matrix-vector multiply needs an additional rotation), which MLX’s QuantizedLinear doesn’t support.
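The deployment problem is visible in a few lines. This sketch builds a randomized Hadamard rotation R (random signs times a normalized Sylvester Hadamard matrix; this exact construction is an assumption, and published implementations differ in details), rotates a weight matrix along its input dimension, and checks two things: an outlier gets spread across its row, and the layer output is only preserved if every input is rotated too.

```python
import numpy as np

def hadamard(n: int) -> np.ndarray:
    """Sylvester construction; n must be a power of two."""
    assert n > 0 and (n & (n - 1)) == 0, "n must be a power of two"
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.block([[h, h], [h, -h]])
    return h

def random_hadamard(n: int, rng: np.random.Generator) -> np.ndarray:
    """Orthogonal R = D H / sqrt(n), with D a random +/-1 diagonal."""
    signs = rng.choice([-1.0, 1.0], size=n)
    return (signs[:, None] * hadamard(n)) / np.sqrt(n)

rng = np.random.default_rng(0)
d_in, d_out = 64, 16
w = rng.normal(size=(d_out, d_in))
w[0, 3] = 50.0                      # one outlier weight
r = random_hadamard(d_in, rng)

w_rot = w @ r                       # what would be quantized and stored
print("max |w| in row 0:", np.abs(w[0]).max(), "-> after rotation:", np.abs(w_rot[0]).max().round(2))

# y = W x = (W R)(R^T x): the stored weights give the right output only
# if the input is rotated at inference time as well.
x = rng.normal(size=d_in)
y = w @ x
print(np.allclose(w_rot @ (r.T @ x), y))  # True: extra rotation on the input
print(np.allclose(w_rot @ x, y))          # False: a stock quantized matmul skips it
```

The spreading is what shrinks the per-group range and lowers reconstruction error; the `r.T @ x` step is the part a standard quantized linear layer does not perform.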

Given that HQQ had already shown that per-tensor NRMSE improvement doesn’t predict perplexity improvement, we chose not to build custom Metal kernels to test a method whose theoretical advantage might not survive the transition to model-level evaluation.

Data-free OBQ: wrong Hessian, wrong answer

Optimal Brain Quantization uses the Hessian of the loss with respect to the weights to determine quantization order and compensate for errors. The “true” Hessian requires activation data (H = XᵀX). We approximated it with the weight-only proxy H ≈ WᵀW.

This made reconstruction worse on every tensor tested — 0.50% average NRMSE increase across 18 tensors, with zero improvements. The weight-only Hessian provides a sensitivity ordering that is essentially uncorrelated with what actually matters for model output. OBQ fundamentally cannot function without knowing the input distribution.
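To show where the proxy enters, here is a compact sketch of Hessian-based error compensation. It uses a GPTQ-style fixed left-to-right column order (a simplification of OBQ, which chooses the order greedily), a per-row 4-bit min/max grid fixed up front, and a small damping term. These choices, the helper names, and the random stand-in weights and activations are assumptions for illustration, not the configuration behind the numbers above. Only the matrix passed as `h` changes between the calibration-based and data-free variants.

```python
import numpy as np

def rtn_grid(w, bits=4):
    """Per-row min/max grid (scale, offset), fixed before compensation starts."""
    lo = w.min(axis=1, keepdims=True)
    hi = w.max(axis=1, keepdims=True)
    return np.maximum(hi - lo, 1e-12) / (2**bits - 1), lo

def snap(col, scale, lo, bits=4):
    """Round one column (shape (d_out,)) onto each row's grid."""
    q = np.clip(np.round((col - lo[:, 0]) / scale[:, 0]), 0, 2**bits - 1)
    return q * scale[:, 0] + lo[:, 0]

def compensated_quantize(w, h, bits=4, damp=0.01):
    """Quantize columns in order, pushing each column's error onto later columns.

    w: (d_out, d_in) weights; h: (d_in, d_in) Hessian or proxy Hessian.
    """
    w = w.astype(np.float64).copy()
    scale, lo = rtn_grid(w, bits)
    h = h + damp * np.mean(np.diag(h)) * np.eye(h.shape[0])  # keep H invertible
    hinv = np.linalg.inv(h)
    q = np.zeros_like(w)
    for j in range(w.shape[1]):
        q[:, j] = snap(w[:, j], scale, lo, bits)
        err = (w[:, j] - q[:, j]) / hinv[j, j]
        w[:, j + 1:] -= np.outer(err, hinv[j, j + 1:])          # OBS-style update
        hinv -= np.outer(hinv[:, j], hinv[j, :]) / hinv[j, j]   # eliminate column j
    return q

rng = np.random.default_rng(0)
w = rng.normal(size=(32, 64))
x = rng.normal(size=(512, 64)) * np.linspace(0.1, 3.0, 64)  # uneven channel scales

def output_err(q):
    return np.linalg.norm(x @ (w - q).T) / np.linalg.norm(x @ w.T)

for name, h in [("identity (plain RTN)", np.eye(64)),
                ("X^T X (calibration)", x.T @ x),
                ("W^T W (data-free proxy)", w.T @ w)]:
    q = compensated_quantize(w, h)
    print(f"{name:24s} weight NRMSE={np.linalg.norm(w - q) / np.linalg.norm(w):.4f} "
          f"output err={output_err(q):.4f}")
```

With an identity H the off-diagonal terms vanish and the procedure reduces exactly to RTN; any other H redistributes error according to its correlations, which is only helpful when those correlations reflect the real inputs.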

Three takeaways for the field

Per-tensor reconstruction error is not a reliable proxy for model quality

This is the central finding and it has implications beyond MINT. Many quantization papers report NRMSE, MSE, or Frobenius norm of the quantization residual as evidence of quality. Our HQQ result is a clean counterexample: a method that uniformly improves reconstruction error across all tensors while uniformly degrading the metric that matters. Reconstruction error measures average fidelity; perplexity measures whether the specific weight values that drive model behavior survived quantization. These can move in opposite directions.
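A toy calculation with hand-picked numbers (not measurements from our experiments) shows how the two metrics can disagree. Two error patterns for a 64-element weight group are scored by plain MSE and by an activation-weighted error Σ sᵢeᵢ², which is the expected squared output error when inputs are independent and zero-mean with per-channel variance sᵢ. The variances, including one large channel, are assumptions for illustration.

```python
import numpy as np

n = 64
# Assumed per-channel activation variance: one dominant input channel.
s = np.ones(n)
s[0] = 100.0

# Pattern A: small errors almost everywhere, but a larger error on channel 0.
e_a = np.full(n, 0.01)
e_a[0] = 0.10
# Pattern B: the channel-0 weight is exact, every other weight is a bit worse.
e_b = np.full(n, 0.02)
e_b[0] = 0.0

for name, e in [("A", e_a), ("B", e_b)]:
    mse = np.mean(e**2)
    weighted = np.sum(s * e**2)  # expected squared output error under the assumption
    print(f"{name}: weight MSE={mse:.3e}  activation-weighted={weighted:.4f}")
```

Pattern A has the lower weight MSE, yet its activation-weighted error is roughly 40 times that of pattern B, because almost all of it sits on the one channel the inputs amplify.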

The practical consequence is that any quantization method optimizing a per-tensor reconstruction objective — without activation-aware weighting — should be validated end-to-end on model-level metrics before drawing quality conclusions. Per-tensor metrics are useful for comparing configurations of the same quantization method (as MINT’s rate-distortion curves do), but unreliable for comparing different quantization methods.

Data-free quantization methods have a hard ceiling

The AdaRound and OBQ results reveal a structural limitation: without activation data, you cannot determine which weight errors the model is sensitive to. AdaRound collapses to RTN. OBQ with a proxy Hessian is worse than plain RTN. Hadamard rotation improves a metric that doesn’t predict the outcome. HQQ finds a different optimum that happens to be worse for the model.

This doesn’t mean data-free methods are inferior — MINT outperforms calibration-based GPTQ at matched sizes across multiple model families. But the advantage comes from allocation (deciding where to spend bits) rather than from per-tensor quantization quality (deciding how to round). In the data-free setting, RTN appears to be at or near the Pareto frontier for per-tensor quantization. The gains are in the meta-problem, not the inner problem.
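The allocation side is a multiple-choice knapsack problem (MCKP): pick exactly one quantization option per tensor so that total size stays within a budget and total loss is minimized. The dynamic program below is a generic textbook sketch, not MINT’s solver. Sizes are in integer budget units, the optional per-tensor `weights` are a hypothetical hook for sensitivity scores, and the numbers in the usage example are made up.

```python
from typing import Optional, Sequence

def mckp_allocate(
    sizes: Sequence[Sequence[int]],
    losses: Sequence[Sequence[float]],
    budget: int,
    weights: Optional[Sequence[float]] = None,
) -> list[int]:
    """Choose one option per tensor to minimize total (weighted) loss within budget.

    sizes[i][j] and losses[i][j] describe option j (e.g. a bit width) for tensor i.
    Returns the chosen option index for each tensor.
    """
    n = len(sizes)
    if weights is None:
        weights = [1.0] * n
    inf = float("inf")
    dp = [0.0] + [inf] * budget      # dp[b]: best loss at total cost exactly b
    picks = []                        # picks[i][b]: option chosen for tensor i at cost b
    for i in range(n):
        new = [inf] * (budget + 1)
        pick = [-1] * (budget + 1)
        for b, base in enumerate(dp):
            if base == inf:
                continue
            for j, (cost, loss) in enumerate(zip(sizes[i], losses[i])):
                nb = b + cost
                cand = base + weights[i] * loss
                if nb <= budget and cand < new[nb]:
                    new[nb], pick[nb] = cand, j
        dp = new
        picks.append(pick)
    best_b = min(range(budget + 1), key=lambda b: dp[b])
    if dp[best_b] == inf:
        raise ValueError("no allocation fits the budget")
    choice = [0] * n
    b = best_b
    for i in reversed(range(n)):     # walk back through the table
        choice[i] = picks[i][b]
        b -= sizes[i][choice[i]]
    return choice

# Two tensors, each with a 4-bit option (4 units) and an 8-bit option (8 units).
sizes = [[4, 8], [4, 8]]
losses = [[0.10, 0.01], [0.05, 0.04]]
print(mckp_allocate(sizes, losses, budget=12))                        # [1, 0]
print(mckp_allocate(sizes, losses, budget=12, weights=[0.1, 10.0]))  # [0, 1]
```

The per-tensor quantizer only supplies the `losses` table; where the bits go is decided entirely by the outer optimization, which is why gains can come from allocation even when the inner quantizer is plain RTN.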

RTN’s simplicity is a feature, not a limitation

Round-to-nearest has no hyperparameters, no convergence criteria, no failure modes, and runs in microseconds per tensor. Every alternative we tested was slower, more complex, and, when it could be evaluated end-to-end, worse. RTN’s min/max range may seem naive, but because it never clips, it provides an implicit form of outlier preservation that more “sophisticated” methods optimize away. For a framework like MINT that evaluates thousands of tensors across eight configurations each, the combination of speed, reliability, and adequate quality makes RTN the right choice.

What would actually help

If per-tensor quantization improvements don’t translate to model quality, what would? Based on our results, we believe the answer lies in three directions that we haven’t yet explored:

First, activation-aware allocation: using calibration data not to improve individual tensor quantization, but to better weight the MCKP (multiple-choice knapsack problem) allocation objective. MINT currently uses NRMSE as the per-tensor loss; weighting by activation-derived sensitivity could improve the allocation decisions without changing the base quantizer.

Second, GPTQ inside MINT — using calibration-based GPTQ as the per-tensor quantizer while keeping MINT’s budget-constrained allocation on top. This sacrifices the data-free property but would test whether the allocation framework provides orthogonal gains on top of a genuinely better quantizer.

Third, better evaluation metrics — our finding that reconstruction error doesn’t predict perplexity suggests that the field needs per-tensor quality metrics that correlate with model-level outcomes. This likely requires activation data, bringing us back to the fundamental tension between data-free operation and quality prediction.
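One candidate of the kind described here is an output-space NRMSE: measure the residual after multiplying by calibration activations instead of in weight space. The function below is a hypothetical sketch (`output_nrmse` is our illustrative name, not an existing API), and the weights, noise, and activations are random stand-ins. With identity “activations” it reduces to ordinary weight NRMSE, which makes the relationship between the two metrics explicit.

```python
import numpy as np

def weight_nrmse(w, w_hat):
    """Residual norm relative to the weight norm (weight space)."""
    return float(np.linalg.norm(w - w_hat) / np.linalg.norm(w))

def output_nrmse(w, w_hat, x):
    """Relative error of the layer output X W^T; x has shape (n_samples, d_in)."""
    return float(np.linalg.norm(x @ (w - w_hat).T) / np.linalg.norm(x @ w.T))

rng = np.random.default_rng(0)
w = rng.normal(size=(16, 32))
w_hat = w + rng.normal(scale=0.05, size=w.shape)  # stand-in quantization noise
x = rng.normal(size=(128, 32))
x[:, 0] *= 20.0                                    # one dominant input channel

print(weight_nrmse(w, w_hat), output_nrmse(w, w_hat, x))
# With identity activations the two metrics coincide.
print(np.isclose(output_nrmse(w, w_hat, np.eye(32)), weight_nrmse(w, w_hat)))  # True
```

The dependence on `x` is the point: any metric that reweights errors by how inputs use each channel needs some sample of those inputs, which is exactly the tension with data-free operation.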

Conclusion

We set out to improve MINT’s base quantizer and instead learned something more valuable: that the quantization research community’s standard quality metric (per-tensor reconstruction error) can be actively misleading, that several promising data-free techniques collapse or regress when tested end-to-end, and that round-to-nearest may be closer to optimal in the data-free setting than its simplicity suggests.

We published these negative results in the MINT paper because they save other researchers from repeating the same experiments and because the underlying finding — reconstruction error doesn’t predict model quality — is relevant to anyone evaluating quantization methods. In a field that tends to publish only improvements, we think the failures are at least as informative.

Code: github.com/baa-ai/MINT — Pre-quantized models: huggingface.co/baa-ai

Read the Full Paper

The complete MINT paper, including formal derivations, benchmark results across 7 model families and 40,000+ questions, and the full MCKP allocation framework, is available on our HuggingFace:

MINT: Compute-Optimal Data-Free Mixed-Precision Quantization for LLMs — Full Paper

huggingface.co/spaces/baa-ai/MINT

Licensed under CC BY-NC-ND 4.0