A 400-billion parameter AI model. A single Mac Studio. 13 minutes of analysis. No GPU cluster required. RAM makes this possible, and it changes who can build with the world's most powerful AI models.

The Apple Silicon Advantage

Apple Silicon's unified memory architecture is quietly rewriting the rules of AI deployment. NVIDIA's most powerful data centre GPUs top out at 80 to 192 GB of memory per card. A Mac Studio with an M3 Ultra ships with up to 512 GB of unified memory, shared seamlessly between the CPU and an 80-core GPU.

That matters a lot for large language models. A 400-billion parameter model in BF16 needs over 800 GB of memory for the weights alone, at 2 bytes per parameter. No single GPU can hold it. Traditional deployments spread the model across 4 to 8 GPUs with complex tensor parallelism, inter-GPU communication overhead, and serious infrastructure cost.
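The memory figure above is simple arithmetic, and a short sketch makes it easy to rerun for other model sizes. The function name `weight_memory_gb` is a hypothetical helper, not part of any library, and the estimate covers weights only (no activations or KV cache), using decimal gigabytes.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Estimate weight storage in decimal gigabytes (1 GB = 1e9 bytes).

    Counts weights only; activations and KV cache are not included.
    """
    return num_params * bits_per_param / 8 / 1e9


# A 400-billion parameter model stored in BF16 (16 bits per parameter).
bf16_gb = weight_memory_gb(400e9, 16)

# The same parameter count at a flat 4 bits per parameter, for comparison.
four_bit_gb = weight_memory_gb(400e9, 4)

print(f"BF16:  {bf16_gb:.0f} GB")
print(f"4-bit: {four_bit_gb:.0f} GB")
```

At 16 bits the weights alone come to 800 GB, which is why no single card in the 80 to 192 GB range can hold them.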

But compress that model intelligently, keeping precision where it matters and reducing it where it doesn't, and it fits in a single Mac's unified memory. That's what RAM does.

What RAM Delivers for Apple AI

RAM is a proprietary compression technology built from the ground up for Apple Silicon. Here's what makes it a natural fit for the Apple ecosystem:

13 Minutes from Download to Deployment

RAM analyses a 400B+ parameter model and produces an optimised compression plan in under 13 minutes on a Mac Studio with an M3 Ultra. Compare that to calibration-based methods like GPTQ or AWQ, which need hours of processing with representative data you might not even have.

No calibration data. No GPU cluster. Peak memory stays well below total model size, making the whole process practical on a single Mac.

Native MLX Integration

RAM plugs directly into Apple's MLX framework. No custom kernels. No framework modifications. Just a clean integration with Apple's native AI toolkit.

The compressed model runs through mlx_lm like any other model, but with noticeably better quality thanks to RAM's intelligent compression decisions.
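As a rough illustration of what "runs through mlx_lm like any other model" could look like, here is a minimal sketch using the `load` and `generate` functions from `mlx_lm`. The wrapper `run_local_model` and the model path in the comment are hypothetical, and the sketch assumes the compressed model is stored in a directory that `mlx_lm` can load directly.

```python
def run_local_model(model_path: str, prompt: str, max_tokens: int = 128) -> str:
    """Load a local MLX model and generate a completion for one prompt."""
    # Imported lazily so this file can be imported on machines without MLX.
    from mlx_lm import load, generate

    # load() returns the model and its tokenizer from a local path or HF repo.
    model, tokenizer = load(model_path)
    return generate(model, tokenizer, prompt=prompt, max_tokens=max_tokens)


# Example call (hypothetical path; requires Apple Silicon with mlx_lm installed):
# text = run_local_model("path/to/compressed-model", "Explain unified memory.")
```

Nothing in the call is specific to the compression method; the point is only that the model is loaded and served the same way as any other MLX model.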

400B Parameters on a Single Machine

Here are the numbers that matter:

| Model | Parameters | RAM-Compressed Size | Peak Memory | Fits On |
|---|---|---|---|---|
| Qwen3-8B | 8.2B | 6.8 GB | ~10 GB | Any M-series Mac |
| Llama4-Maverick | 401.6B | ~200 GB | ~240 GB | M3/M4 Ultra 512 GB |
| Qwen3.5-397B | 403.4B | 199 GB | ~240 GB | M3/M4 Ultra 512 GB |

A RAM-quantized Qwen3.5-397B peaks at roughly 240 GB of memory, comfortably within the 512 GB of unified memory on a single Mac Studio. No GPU cluster. No cloud infrastructure. No inter-node communication latency.

Quality That Doesn't Compromise

The natural worry with aggressive quantization is quality loss. RAM's results on Apple Silicon speak for themselves:

These scores come from a model running at just 4.31 average bits per parameter, compressed to roughly a quarter of its original BF16 size. 96.0% on ARC-Challenge means near-perfect science reasoning from a model running on a desktop Mac.
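An "average bits per parameter" figure is a parameter-weighted mean over parts of the model stored at different precisions. The sketch below shows that calculation with a made-up allocation; the group sizes and bit widths are illustrative assumptions only, not RAM's actual compression plan, and the helper `average_bits` is hypothetical.

```python
def average_bits(groups: list[tuple[float, int]]) -> float:
    """Parameter-weighted mean bit width.

    Each group is (number_of_parameters, bits_per_parameter).
    """
    total_params = sum(n for n, _ in groups)
    total_bits = sum(n * bits for n, bits in groups)
    return total_bits / total_params


# Illustrative allocation only (NOT taken from RAM): most weights at 4 bits,
# a small share kept at higher precision.
example_allocation = [
    (90e9, 4),   # bulk of the weights, compressed hard
    (8e9, 8),    # a more sensitive share, kept at 8 bits
    (2e9, 16),   # a small share left in 16-bit
]

avg = average_bits(example_allocation)
size_ratio = avg / 16  # fraction of the 16-bit (BF16) size

print(f"average bits: {avg:.2f}, fraction of BF16 size: {size_ratio:.3f}")
```

Applying the same ratio to the figure quoted above, 4.31 bits against a 16-bit baseline works out to about 27% of the original size.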

In a head-to-head perplexity comparison, RAM edges out uniform 4-bit quantization (4.283 vs 4.298 PPL, where lower is better). Its proprietary compression technology identifies which parts of the model need protection, preserving quality where it counts while aggressively compressing the rest.
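For readers less familiar with the metric, perplexity is the exponential of the negative mean log-probability a model assigns to the correct tokens, so lower is better. The sketch below uses the standard definition and then computes the relative gap between the two figures quoted above; the function names are hypothetical helpers, and the evaluation setup behind the 4.283 and 4.298 numbers is not reproduced here.

```python
import math


def perplexity(token_logprobs: list[float]) -> float:
    """exp(-mean(log p)) over natural-log token probabilities."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def relative_gap(ppl_a: float, ppl_b: float) -> float:
    """How much lower ppl_a is than ppl_b, as a fraction of ppl_b."""
    return (ppl_b - ppl_a) / ppl_b


# Sanity check: a model that gives every token probability 1/4 has PPL 4.
uniform_ppl = perplexity([math.log(0.25)] * 4)

# Relative gap between the two perplexities quoted in the text.
gap = relative_gap(4.283, 4.298)

print(f"uniform PPL: {uniform_ppl:.3f}, relative gap: {gap:.2%}")
```

The gap between the two quoted scores is about a third of a percent.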

Why This Matters for the Apple AI Ecosystem

Putting Frontier Models on Everyone's Desk

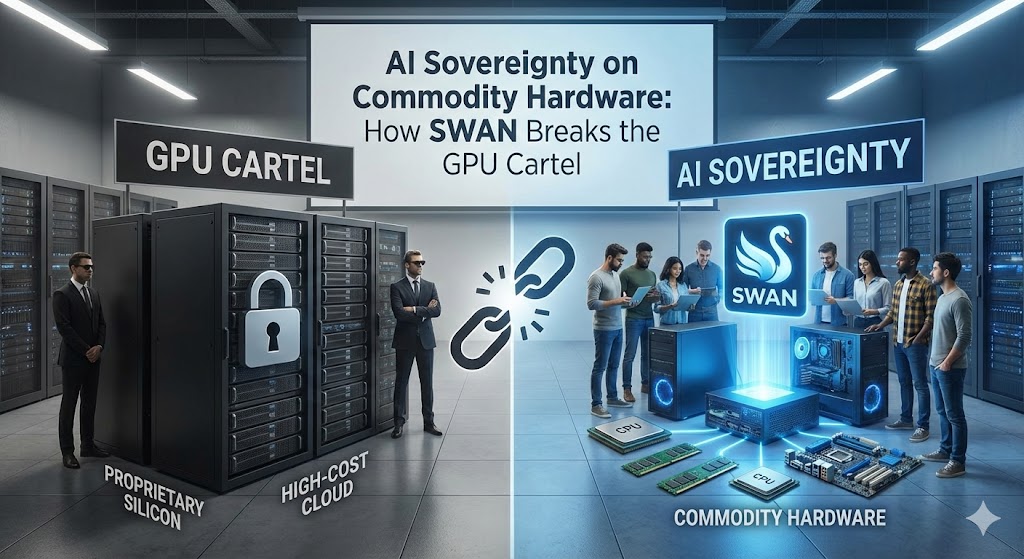

Until now, running 400B+ parameter models meant multi-GPU cloud instances at $10 to $50+ per hour. RAM on Apple Silicon puts these models on a machine that sits on your desk, runs silently, and costs a one-time hardware purchase. For researchers, indie developers, and small teams, this is a big deal.

Data Privacy by Default

Running locally means your data never leaves your machine. No API calls to cloud providers. No data residency concerns. No terms-of-service changes that could expose proprietary data. For regulated industries like healthcare, finance, and legal, this isn't optional; it's a requirement.

Zero Infrastructure Overhead

No Docker containers. No Kubernetes clusters. No GPU driver compatibility issues. No NCCL configuration for multi-GPU communication. The model loads through MLX and runs on the unified memory architecture Apple Silicon was designed around.

Offline-Capable AI

A RAM-quantized model on your Mac works without an internet connection. On a plane, in a secure facility, or during an outage, you still have a 400B parameter model with 96% science reasoning accuracy ready to go.

The RAM Advantage for MLX Developers

If you're building AI applications on Apple Silicon, RAM fits right into your existing workflow. Point it at a model, and within minutes you have a compressed version ready to serve through mlx_lm. No GPU cluster provisioning. No calibration data to hunt down. No hour-long compression runs.

What This Means for Apple's AI Future

Apple Silicon is already the most accessible high-memory platform for AI work. RAM removes the last major barrier: you no longer need specialised quantization infrastructure or calibration datasets to compress frontier models for this hardware.

As Apple keeps scaling unified memory and the MLX ecosystem matures, the combination of intelligent compression and Apple's hardware architecture creates a real alternative to traditional GPU-cluster deployment. For many use cases, it's not just competitive. It's better.

RAM is open source. Code and data at github.com/baa-ai/swan-quantization.

Read the Full Paper

The full RAM paper, including evaluation across four models and detailed deployment methodology, is available on our HuggingFace:

RAM: Proprietary Model Compression for Apple Silicon, Full Paper

huggingface.co/spaces/baa-ai/swan-paper

Licensed under CC BY-NC-ND 4.0