Original

Research

Original research on SWAN quantization and sovereign AI deployment. Our work proves that frontier AI capability does not require permission from a hyperscaler, an allocation from NVIDIA, or a seven-figure cloud contract.

SWAN-Guided Knowledge Distillation: What If Your Student Model Was Born Deployment-Ready?

SAKD applies SWAN's data-free weight-geometry metrics and SAT's geometry regularisation to knowledge distillation, producing students that are simultaneously better knowledge-transfer targets and quantization-ready by construction.

Sensitivity-Aware Training: What If Models Never Needed Post-Training Quantization?

SAT extends the SWAN framework into the training loop itself, producing models that are quantization-ready by construction with 25% less training memory. The implications for training economics are staggering.

SWAN: Data-Free Mixed-Precision Quantization via Multi-Metric Sensitivity Analysis

Introducing SWAN — a data-free, per-tensor mixed-precision quantization method that uses four complementary sensitivity metrics to compress 400B+ parameter models in under 13 minutes.

SWAN on Apple Silicon: Running 400B Parameter Models on a Single Mac

How SWAN makes it possible to run the world's largest AI models on Apple Silicon Macs — 400B+ parameters on a single Mac Studio with 512 GB unified memory.

SWAN for Enterprise: Deploying Frontier AI Without the GPU Bill

How SWAN quantization eliminates calibration dependencies, reduces infrastructure costs, and enables private, on-premise deployment of 400B+ parameter AI models.

SWAN and the Humanoid Intelligence Problem

Humanoid robots need frontier-class reasoning in under 20 watts. SWAN’s mixed-precision quantization creates the compact, intelligent models that make embodied AI possible.

The Death of Uniform Quantization

We analysed 2,347 tensors and found sensitivity varies by 50×. Treating all parameters equally is a fundamental mistake — and the data proves it.

AI Sovereignty on Commodity Hardware: How SWAN Breaks the GPU Cartel

Access to frontier AI shouldn’t require an NVIDIA allocation or a hyperscaler contract. SWAN enables sovereign AI capability on hardware anyone can buy.

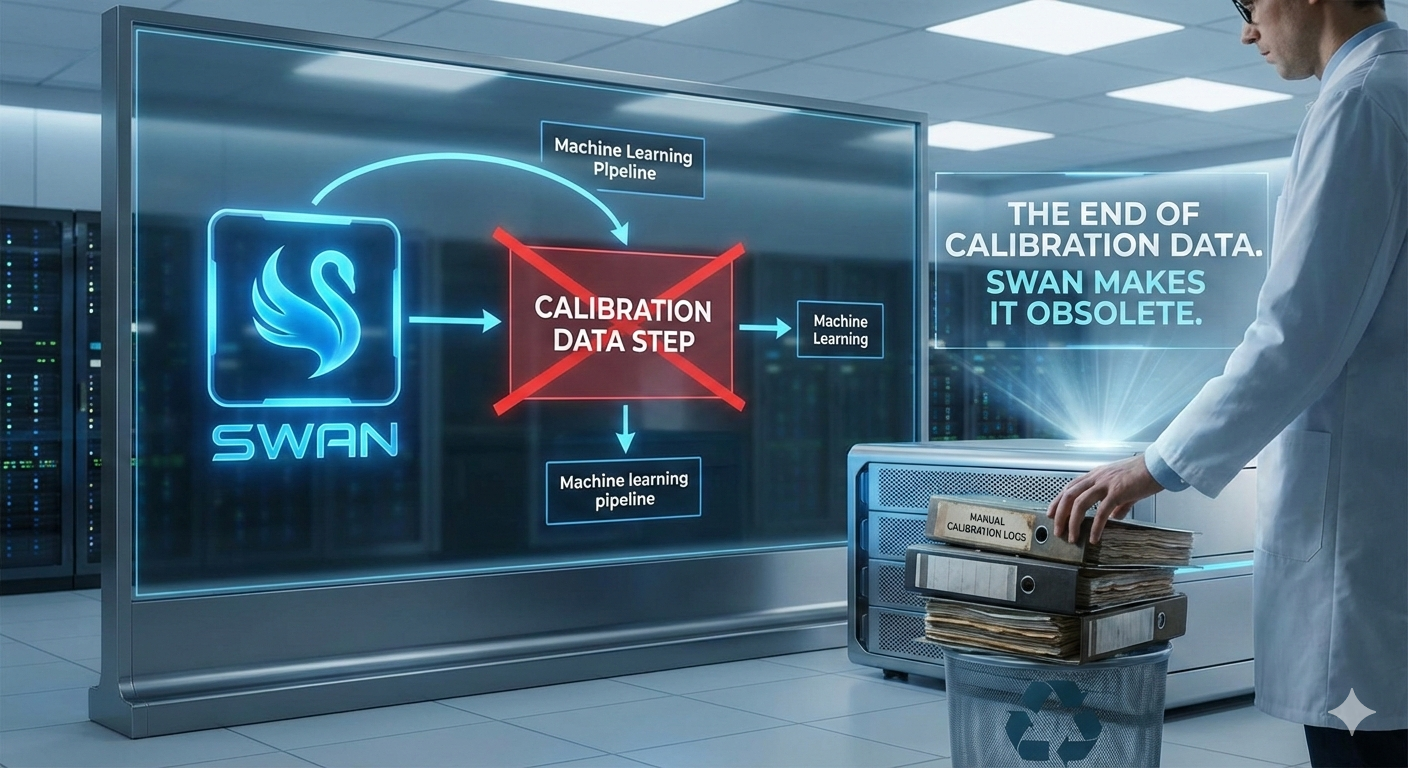

The End of Calibration Data

Every competitive quantization method requires calibration data. SWAN needs nothing but the weights themselves. An entire category of ML infrastructure just became obsolete.

Why Your 4-bit Model is Leaving Intelligence on the Table

Sensitivity varies by 50x across tensors. Uniform 4-bit simultaneously over-compresses critical weights and under-compresses insensitive ones. The numbers prove it.

AI Without Permission: Privacy, Sovereignty, and the Case for Local Inference

Every API call is a data disclosure. SWAN makes truly private, permissionless AI possible on hardware you own — no network, no logs, no content policies.

Profiling Expert Activation Patterns in 512-Expert MoE Models

How we profiled 30,720 experts across two large MoE models, what the activation patterns revealed, and why the numbers challenge common assumptions about expert redundancy.

Per-Expert Mixed-Bit Quantization via Mask-and-Combine Dispatch

We built a custom kernel that assigns different bit widths to individual experts in MoE models. It preserved model quality perfectly — and was too slow to use in production.

Expert Pruning in MoE Models — When Dead Experts Aren't Dead

We pruned 18% of experts from a 512-expert MoE model based on activation profiling. The model passed all automated quality tests. Then we looked at the actual responses.

MLX Quantization on Apple Silicon — Engineering Pitfalls and Workarounds

We quantized 400B+ parameter MoE models on a 512 GB Mac Studio using MLX. Along the way, we found a data corruption bug, a dtype footgun, and a GPU timeout trap.

Layer-Level vs Expert-Level Granularity in MoE Quantization

We compared three granularities of bit allocation for MoE quantization. The finest granularity was the slowest and barely the best.

Why Collapse Tests Are Insufficient for Quantization Quality Assessment

Three different quantization variants all scored 15/15 on automated quality tests. One couldn't translate a sentence into Spanish.