We benchmarked RAM across 7 model families, 5 benchmark suites, and over 40,000 questions. The results challenge fundamental assumptions that the quantization community has been operating under for years.

Some of these findings are about RAM specifically. Others are about the field. We've separated the genuinely surprising results (the ones that should change how people think about quantization) from the findings that are interesting but less unexpected.

All data comes from our complete benchmark results and the RAM paper.

The Genuinely Surprising Findings

1. Mean perplexity can give completely inverted quality rankings

The GLM-4.7-Flash result is genuinely alarming for the field. BF16 has mean PPL 11.5, which appears worse than RAM's 10.2 (lower is better), suggesting that quantization improves the model. That is obviously nonsensical. Median PPL gives the correct ranking: BF16 8.5, RAM 8.7.

The cause: five catastrophic outlier sequences where BF16 produces per-sequence perplexity values of 25,000–81,000 and quantization noise happens to stabilize them. This isn't a RAM-specific finding. It's a methodological warning for every quantization paper that reports only mean PPL.

The controlled noise experiment seals it: random Gaussian noise at matched magnitude produces the same median shift, proving the "regularization" is an artifact. If you have ever seen a paper claiming quantization improves perplexity, this is probably why.

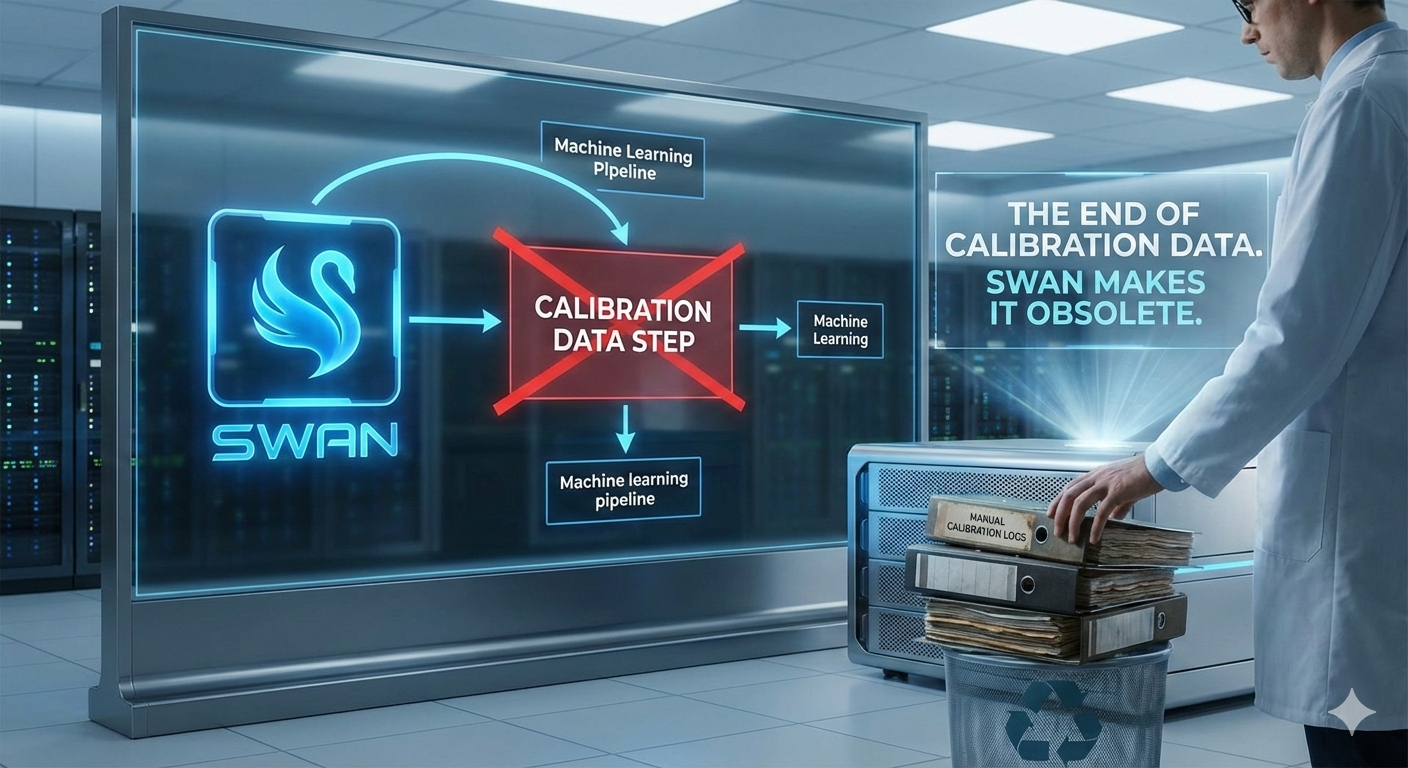
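To make the mechanism concrete, here is a minimal sketch using synthetic per-sequence perplexities. None of these numbers are measured data: the 995 ordinary sequences, the five outlier values, and the 2% degradation are assumptions chosen only to mimic the pattern described above.

```python
import statistics

def summarize(per_sequence_ppl):
    """Return (mean, median) of a list of per-sequence perplexities."""
    return statistics.fmean(per_sequence_ppl), statistics.median(per_sequence_ppl)

# Synthetic data, NOT benchmark results.
# 995 ordinary sequences with perplexity between 8.000 and 8.994.
ordinary = [8.0 + 0.001 * i for i in range(995)]

# Hypothetical "baseline": the ordinary sequences plus five catastrophic outliers.
baseline = ordinary + [25_000.0, 40_000.0, 55_000.0, 70_000.0, 81_000.0]

# Hypothetical "perturbed" model: every ordinary sequence gets 2% worse,
# but the five outliers happen to collapse to a much smaller value.
perturbed = [p * 1.02 for p in ordinary] + [300.0] * 5

base_mean, base_median = summarize(baseline)
pert_mean, pert_median = summarize(perturbed)

print(f"mean:   baseline={base_mean:.2f}  perturbed={pert_mean:.2f}")
print(f"median: baseline={base_median:.3f}  perturbed={pert_median:.3f}")
```

In this toy setup the mean ranks the perturbed model as better even though it is worse on every ordinary sequence, while the median ranks the two correctly. Five sequences out of a thousand are enough to flip the mean.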

2. Data-free consistently beats calibration-based at matched sizes

This is genuinely counterintuitive. GPTQ has access to activation statistics from real data, so it knows which weights matter for actual model behaviour. RAM has only the weights themselves. Yet RAM wins by 1.0–4.6% on median PPL across three different MoE architectures at exactly matched model sizes.

| Model | GPTQ PPL (median) | RAM PPL (median) | Delta (RAM vs GPTQ) |
|---|---|---|---|
| Qwen3-30B-A3B | 9.160 | 8.959 | −2.2% |
| Qwen2-57B-A14B | 6.396 | 6.335 | −1.0% |
| Mixtral-8x7B | 4.640 | 4.426 | −4.6% |
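The Delta column reads as the relative change in median PPL from GPTQ to RAM, where negative means RAM is lower (better). That reading is our interpretation of the table; the short check below reproduces the three values from the PPL columns. The function name is ours, for illustration only.

```python
def relative_delta_pct(baseline_ppl, candidate_ppl):
    """Relative PPL change of the candidate versus the baseline, in percent."""
    return 100.0 * (candidate_ppl - baseline_ppl) / baseline_ppl

# (GPTQ median PPL, RAM median PPL), copied from the table above.
rows = {
    "Qwen3-30B-A3B": (9.160, 8.959),
    "Qwen2-57B-A14B": (6.396, 6.335),
    "Mixtral-8x7B": (4.640, 4.426),
}

deltas = {name: relative_delta_pct(gptq, ram) for name, (gptq, ram) in rows.items()}

for name, delta in deltas.items():
    print(f"{name}: {delta:+.1f}%")
```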

The explanation the paper offers (that joint group-size optimization, budget-constrained allocation, and better MoE expert coverage combine to outweigh the information advantage of calibration) is plausible but not fully proven.

What's clear: the conventional wisdom that "calibration-based is always better than data-free" is wrong, at least for current GPTQ defaults with fixed group sizes.

3. Downstream benchmarks are essentially useless for evaluating quantization quality

The raw numbers drive this home. On Qwen3-30B, PPL varies 18% from min-safe to 8-bit, while ARC-Challenge varies 2.7 percentage points. On Qwen3.5-35B, MMLU (14,015 questions) spans only 1.5 pp across a 2.5× size range. MMLU actually peaks at 37 GB and slightly declines at 51 GB even as PPL keeps improving.

This means most quantization papers that report ARC-C or MMLU improvements are measuring noise, not signal. The benchmarks saturate at roughly 4-bit precision. Above that, standard accuracy benchmarks cannot distinguish 4-bit, 8-bit, and 16-bit models. Only perplexity has the resolution to discriminate.

This is a finding that affects how the entire field should be evaluating quantization methods. If your evaluation suite consists of ARC-C and Winogrande, you're not measuring what you think you're measuring.
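A rough way to see why small accuracy gaps are hard to trust is the binomial standard error of an accuracy score. The sketch below rests on assumptions: it treats questions as independent, uses illustrative accuracy levels (60% and 70%) that are not taken from our results, and uses the 1,172-question ARC-Challenge test set size. Because every configuration answers the same questions, a paired analysis would be tighter, so treat this as an order-of-magnitude guide only.

```python
import math

def accuracy_std_error_pp(accuracy, n_questions):
    """Binomial standard error of an accuracy estimate, in percentage points."""
    return 100.0 * math.sqrt(accuracy * (1.0 - accuracy) / n_questions)

def ci95_half_width_pp(accuracy, n_questions):
    """Half-width of a normal-approximation 95% interval, in percentage points."""
    return 1.96 * accuracy_std_error_pp(accuracy, n_questions)

# Accuracy levels are illustrative assumptions, not benchmark results.
arc_half_width = ci95_half_width_pp(accuracy=0.60, n_questions=1172)
mmlu_half_width = ci95_half_width_pp(accuracy=0.70, n_questions=14015)

print(f"ARC-Challenge: +/- {arc_half_width:.2f} pp")
print(f"MMLU:          +/- {mmlu_half_width:.2f} pp")
```

Under these assumptions a single ARC-Challenge score carries an uncertainty of the same size as the 2.7 pp spread reported above, and even MMLU's much larger question count leaves an interval that is a sizeable fraction of its 1.5 pp span.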

The Interesting But Less Surprising Findings

4. Infrastructure catches up to research

Our MLX kernel benchmarks reveal something you would never see in an academic paper. MLX had a real performance regression for group size 32 in version 0.29 (a 1.8–2.2× prefill penalty) that was fully fixed by version 0.31 through upstream PRs #1861 and #2031.

| Scenario | MLX 0.29.3 | MLX 0.31.1 |
|---|---|---|
| Prefill g32/g128 | 1.8–2.2× penalty | 1.00× (fixed) |
| Generation g32/g128 | 1.07–1.14× | 1.01–1.10× |
| Prefill g64/g128 | ~1.3× | 1.00× (fixed) |

This is practically important because RAM's preference for g32 would have been impractical six months ago. It's a nice example of research and infrastructure co-evolving, and the kind of detail that matters for real deployment but never appears in a conference paper.

5. Budget-constrained allocation is surprisingly efficient at the extremes

On Llama-4-Scout (109B), the 3.5× size difference between min-safe (47 GB) and the 192 GB model produces only a 1.18× PPL difference (8.675 vs 7.359), or roughly 18%. This means someone running on a 48 GB Mac sacrifices surprisingly little quality compared to someone with 192 GB.

The shape of the quality-vs-size curve is convex in a way that favours constrained deployments. The first bytes of budget above the safety floor produce massive returns: the first 1 GB above the 4-bit floor buys a 6.8% PPL improvement on Qwen3-30B. After that, the curve flattens fast.

The practical takeaway: if you're agonising over whether your hardware is "good enough" for a given model, it probably is. The penalty for being memory-constrained is far smaller than most people assume, provided you use budget-aware allocation instead of uniform quantization.

What This Means

Several of these findings are not specific to RAM. The mean-vs-median perplexity problem affects every quantization evaluation. The saturation of downstream benchmarks means the field needs better evaluation methodology.

What is specific to RAM is having the framework that made these discoveries possible. When you give an optimizer the freedom to jointly select bit-widths and group sizes under a budget constraint, the answers it produces are not what human intuition would suggest. The optimizer sees the global tradeoff that heuristics miss.
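To illustrate what "jointly select bit-widths and group sizes under a budget constraint" can look like, here is a toy greedy allocator. It is not RAM's algorithm, and it is not guaranteed to find the optimal allocation. The `Option` type, the per-layer `error` values, and the layer sizes are all hypothetical. The storage model assumes one 16-bit scale and one 16-bit bias per group, which is an assumption about the quantization format, not something stated in this article.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Option:
    bits: int          # bits per quantized weight
    group_size: int    # weights sharing one scale/bias pair
    error: float       # hypothetical quality-loss proxy, lower is better

def bits_per_weight(bits, group_size, overhead_bits=32):
    """Effective storage per weight, assuming 32 bits of scale+bias per group."""
    return bits + overhead_bits / group_size

def allocate(layers, budget_bits):
    """Greedy sketch: start each layer at its cheapest option, then repeatedly
    apply the affordable upgrade with the best error reduction per extra bit."""
    def cost(n_weights, opt):
        return n_weights * bits_per_weight(opt.bits, opt.group_size)

    choice = {name: min(opts, key=lambda o: cost(n, o))
              for name, (n, opts) in layers.items()}
    used = sum(cost(layers[name][0], opt) for name, opt in choice.items())
    if used > budget_bits:
        raise ValueError("budget is below the cheapest possible allocation")

    while True:
        best = None  # (score, layer name, option, extra bits)
        for name, (n, opts) in layers.items():
            current = choice[name]
            for opt in opts:
                extra = cost(n, opt) - cost(n, current)
                gain = current.error - opt.error
                if extra > 0 and gain > 0 and used + extra <= budget_bits:
                    score = gain / extra
                    if best is None or score > best[0]:
                        best = (score, name, opt, extra)
        if best is None:
            return choice, used
        _, name, opt, extra = best
        choice[name] = opt
        used += extra

# Hypothetical two-layer model: (number of weights, candidate options).
layers = {
    "attn":   (1_000_000, [Option(4, 128, 1.0), Option(4, 32, 0.6), Option(8, 64, 0.1)]),
    "expert": (8_000_000, [Option(4, 128, 2.0), Option(4, 32, 1.5), Option(8, 64, 0.4)]),
}
budget = 9_000_000 * 4.5  # an average of 4.5 bits per weight

choice, used = allocate(layers, budget)
for name, opt in choice.items():
    print(f"{name}: {opt.bits}-bit, g{opt.group_size}")
```

In this toy example the small attention layer moves to group size 32 while the large expert layer stays at group size 128, because the same upgrade on the expert layer would not fit in the remaining budget. Even a simple search like this produces non-uniform choices that a single global setting would not.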

The full benchmark data is available in our complete results article. Every number is reproducible using the code at github.com/baa-ai/RAM.

Data from the RAM paper and benchmark evaluation suite. All experiments on Apple M2 Ultra (192 GB). Perplexity: WikiText-2 test split, seq_len=2048, seed 42. Downstream benchmarks: lm-evaluation-harness via MLX backend: ARC-Challenge (25-shot), Winogrande (5-shot), HellaSwag (10-shot), MMLU (5-shot, 14,015 questions). Full paper: huggingface.co/spaces/baa-ai/RAM. Code: github.com/baa-ai/RAM.

Read the Full Paper

The complete RAM paper, including formal derivations, benchmark results across 7 model families and 40,000+ questions, and the full optimal allocation framework, is available on our HuggingFace:

RAM: Compute-Optimal Proprietary Compression for LLMs (Full Paper)

huggingface.co/spaces/baa-ai/RAM

Licensed under CC BY-NC-ND 4.0