MLX Quantization on Apple Silicon: How MINT Turns a Mac into a Model Compression Lab

Every MINT result was produced on a single M2 Ultra via MLX. No GPUs, no cloud, no calibration data. From 109B parameters on a Mac Studio to 30B MoE on an M4 Pro.

Why You Can't Prune MoE Experts — Even the Ones Nobody Uses

Removing just 5% of the least-used experts from a 256-expert MoE model causes a 13x perplexity blow-up. Rarely activated does not mean safely removable.

DynaMINT: When MINT Meets Expert-Aware Tiering

Combining MINT's optimal bit search with activation-guided expert tiering preserves quality at +0.5% PPL while enabling per-expert precision differentiation.

What 100 Prompts Reveal About Expert Routing in 256-Expert MoE Models

Profiling expert activation across 100 diverse prompts reveals moderate routing concentration, domain-dependent usage, and a dramatic sample-size effect on apparent redundancy.

The Compression Variable Everyone Ignored

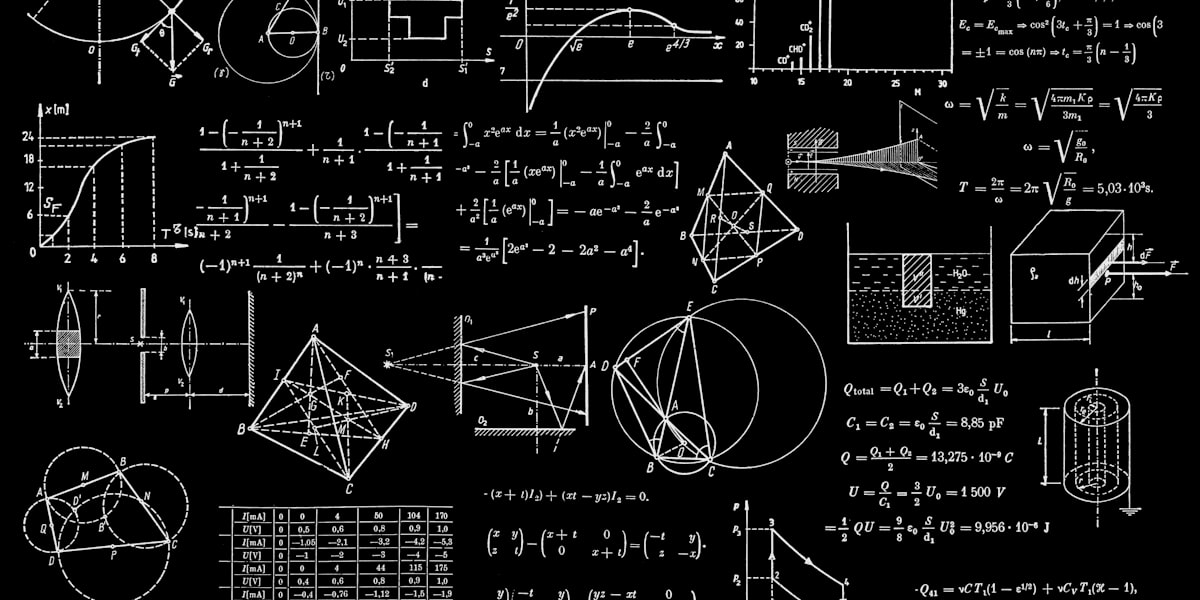

85% of tensors want group size 32, not 128. Per-tensor group-size selection provides larger quality gains than bit-width changes, and MLX’s implementation is what makes it possible.
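To make the group-size effect concrete, here is a minimal sketch in plain Python. It assumes symmetric round-to-nearest quantization with one scale per group, which is a simplification and not necessarily the scheme MLX or MINT uses; `rtn_group_error` and the synthetic Gaussian weights are hypothetical, chosen only for illustration.

```python
import random

def rtn_group_error(weights, bits, group_size):
    """Mean squared error of symmetric round-to-nearest quantization,
    using one scale per group of `group_size` consecutive weights."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit symmetric
    total = 0.0
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        # The largest magnitude in the group sets the step size;
        # fall back to 1.0 for an all-zero group.
        scale = max(abs(w) for w in group) / qmax or 1.0
        for w in group:
            q = max(-qmax, min(qmax, round(w / scale)))
            total += (w - q * scale) ** 2
    return total / len(weights)

# Synthetic stand-in for a weight tensor (illustrative only).
rng = random.Random(0)
weights = [rng.gauss(0.0, 1.0) for _ in range(4096)]

err_g32 = rtn_group_error(weights, bits=4, group_size=32)
err_g128 = rtn_group_error(weights, bits=4, group_size=128)
print(f"4-bit MSE, group size 32:  {err_g32:.5f}")
print(f"4-bit MSE, group size 128: {err_g128:.5f}")
```

Smaller groups track the local weight range more tightly, so the step size shrinks and the error drops. The cost is extra metadata: assuming one 16-bit scale per group, group size 32 adds 0.5 bits per weight versus 0.125 for group size 128.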

When Better Is Worse: What We Learned by Trying to Improve Our Quantizer

Lower reconstruction error. Worse model quality. We tested four alternatives to RTN and discovered that per-tensor reconstruction error is not a reliable proxy for model quality.

Eight Things Our Benchmarks Reveal That Nobody Expected

Group size matters more than bit-width. Mean perplexity lies. Calibration loses to data-free. Downstream benchmarks are useless for quantization. And four more findings that challenge the field.

MINT Benchmark Results: 7 Models, 40,000+ Questions, One Winner

Complete evaluation across 7 model families from 8B to 109B. Perplexity, ARC-Challenge, Winogrande, HellaSwag, MMLU, throughput, and GPTQ head-to-head — MINT wins on every model tested.

Mean Perplexity Is Lying to You

Standard perplexity evaluation produces misleading quality orderings. On GLM-4.7-Flash, mean PPL ranks BF16 as worst — the exact opposite of reality. Five outlier sequences flip the entire leaderboard.

Does Quantization Actually Regularize? We Tested It.

Quantized models sometimes beat full precision on perplexity. We injected matched-scale Gaussian noise to find out: it’s a distributional artifact, not regularization.

Beyond Perplexity: Downstream Benchmarks Confirm MINT Beats All Quantization Strategies

ARC-Challenge and Winogrande benchmarks confirm that MINT’s mixed-precision allocation preserves or improves real task accuracy at matched model sizes.

Soft Priors vs Hard Rules: Why MINT Doesn’t Hard-Code Protection

Every quantization framework hard-codes which tensors to protect. MINT replaces binary rules with soft multiplicative priors that let the optimizer decide — and the results are surprising.

Beyond NRMSE: The Sensitivity Features MINT Computes But Doesn’t Use (Yet)

Spectral features, per-group kurtosis, and norm-guided output noise amplification — computed for every tensor but not yet used by the allocator. Here’s what they reveal.

MINT: Compute-Optimal Data-Free Mixed-Precision Quantization for LLMs

The full MINT research paper. Budget-targeted quantization via rate-distortion optimization, validated across dense and MoE architectures from 8B to 109B parameters. Outperforms GPTQ without calibration data.

One Number That Prevents Catastrophic Quantization

A natural 2 dB gap in SQNR separates catastrophic from usable compression. 9 dB is the universal safety floor — validated across 8B to 109B parameters, dense and MoE.
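For readers unfamiliar with the metric, the sketch below computes SQNR using the standard definition (signal power over quantization-noise power, in decibels) and checks it against the 9 dB floor quoted above. The exact definition used in the article may differ; `sqnr_db` and `is_safe` are hypothetical helper names.

```python
import math

SAFETY_FLOOR_DB = 9.0  # the floor quoted in the teaser above

def sqnr_db(original, quantized):
    """Signal-to-quantization-noise ratio in dB (standard definition)."""
    signal = sum(w * w for w in original)
    noise = sum((w - q) ** 2 for w, q in zip(original, quantized))
    if noise == 0:
        return math.inf  # lossless reconstruction
    return 10 * math.log10(signal / noise)

def is_safe(original, quantized, floor_db=SAFETY_FLOOR_DB):
    """True if the quantized tensor stays at or above the SQNR floor."""
    return sqnr_db(original, quantized) >= floor_db

# A 10% error on a unit weight gives signal/noise = 100, i.e. 20 dB.
print(sqnr_db([1.0, 0.0], [0.9, 0.0]))
# Halving every weight gives signal/noise = 4, about 6 dB: below the floor.
print(is_safe([1.0, 1.0], [0.5, 0.5]))
```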

When Data-Free Beats the Gold Standard

MINT outperforms GPTQ at matched model sizes across three MoE families — without ever seeing a single calibration sample. The calibration paradigm has a problem.

How to Fit Massive Models onto Tiny Memory Footprints without Losing Accuracy

Specify ‘24 GB for RTX 4090’ and receive the provably optimal quantization. MINT solves the Multiple-Choice Knapsack Problem for model compression — in under a second.
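As a rough illustration of the Multiple-Choice Knapsack formulation, the sketch below picks exactly one (size, distortion) option per tensor so that total size fits a budget and total distortion is minimal. It is a textbook dynamic program over integer sizes, not MINT's actual solver; `allocate` and the toy numbers are hypothetical.

```python
def allocate(tensors, budget):
    """Exact multiple-choice knapsack by dynamic programming.

    tensors: list of option lists; each option is (size, distortion)
             with an integer size.
    budget:  maximum total size.
    Returns (total_distortion, chosen_option_indices), or None if no
    combination fits the budget.
    """
    # best maps total size used so far -> (distortion, choices)
    best = {0: (0.0, [])}
    for options in tensors:
        nxt = {}
        for used, (dist, choices) in best.items():
            for idx, (size, err) in enumerate(options):
                total = used + size
                if total > budget:
                    continue
                cand = (dist + err, choices + [idx])
                if total not in nxt or cand[0] < nxt[total][0]:
                    nxt[total] = cand
        best = nxt
    if not best:
        return None
    return min(best.values(), key=lambda entry: entry[0])

# Toy problem: three tensors, each with a small and a large option.
toy = [
    [(2, 9.0), (4, 1.0)],  # sensitive tensor
    [(2, 3.0), (4, 2.0)],  # insensitive tensor
    [(2, 8.0), (4, 0.5)],  # sensitive tensor
]
result = allocate(toy, budget=10)
print(result)  # (4.5, [1, 0, 1])
```

With a budget of 10, only two tensors can take the large option, and the solver gives the small option to the tensor whose distortion suffers least from it.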

The 16-Bit Allocation Mistake

SWAN v1 kept 5.6% of parameters at 16-bit. MINT proves those tensors are better served by 4-bit with group size 32, giving comparable quality at 25% of the storage cost.

Eight Measurements per Tensor: The End of Single-Point Sensitivity

Single-point sensitivity metrics create circular reasoning. Multi-point rate-distortion curves capture the full error surface and enable provably optimal quantization allocations.

How to Quantize a Model with 256 Experts

Individual expert analysis for small MoE models, k-means clustering for large ones, and conservative aggregation that ensures no expert is the weak link.

The GPU Hours Nobody Needed to Spend

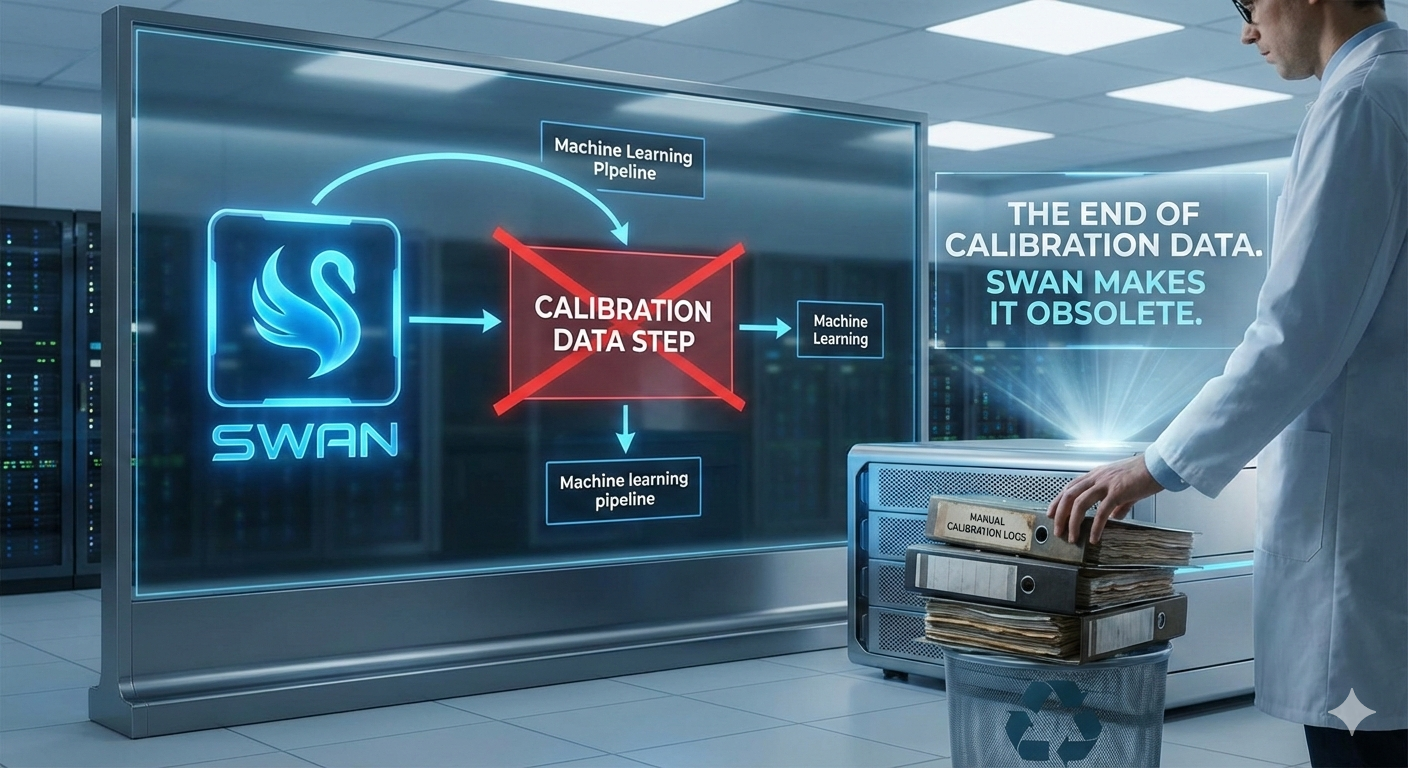

How eliminating calibration from model quantization could save the AI industry millions of GPU-hours, tens of millions of dollars, and enough electricity to power a small city.

The Quantization Bottleneck Is About to Break

Why data-free, budget-aware model compression could reshape how the industry deploys large language models. Calibration elimination, budget-targeted allocation, group-size optimization, and safety thresholds.

What SWAN Actually Delivers: Evidence from Four Models and 20,000 Tensors

The complete value proposition grounded in empirical evidence. Data-free compression, MoE-optimised allocation, model diagnostics, evaluation methodology, and pipeline automation — tested across dense and MoE architectures.

SWAN Evaluation Results: Four Models, Three Architectures, One Framework

Complete evaluation across Qwen3-8B, Qwen3-30B-A3B, GLM-4.7-Flash and GLM-4.7. Perplexity, academic benchmarks, bit allocation analysis, and compression efficiency — all the numbers.

Why SWAN Matters: Data-Free Quantization and the Future of Model Deployment

The real significance isn’t the benchmark numbers — it’s what becomes possible when quantization is instant, data-free, and automatic. From CI/CD pipelines to privacy compliance.

When Quantization Beats Full Precision: Anatomy of a Perplexity Anomaly

We quantized GLM-4.7-Flash to 4.4 average bits and measured 12.5% lower perplexity than the BF16 baseline. Here's why that's not the breakthrough it appears to be.

SWAN-Guided Knowledge Distillation: What If Your Student Model Was Born Deployment-Ready?

SAKD applies SWAN's data-free weight-geometry metrics and SAT's geometry regularisation to knowledge distillation, producing students that are simultaneously better knowledge-transfer targets and quantization-ready by construction.

Sensitivity-Aware Training: What If Models Never Needed Post-Training Quantization?

SAT extends the SWAN framework into the training loop itself, producing models that are quantization-ready by construction with 25% less training memory. The implications for training economics are staggering.

SWAN: Data-Free Mixed-Precision Quantization via Multi-Metric Sensitivity Analysis

Introducing SWAN — a data-free, per-tensor mixed-precision quantization method that uses four complementary sensitivity metrics to compress 400B+ parameter models in under 13 minutes.

SWAN on Apple Silicon: Running 400B Parameter Models on a Single Mac

How SWAN makes it possible to run the world's largest AI models on Apple Silicon Macs — 400B+ parameters on a single Mac Studio with 512 GB unified memory.

SWAN for Enterprise: Deploying Frontier AI Without the GPU Bill

How SWAN quantization eliminates calibration dependencies, reduces infrastructure costs, and enables private, on-premise deployment of 400B+ parameter AI models.

SWAN and the Humanoid Intelligence Problem

Humanoid robots need frontier-class reasoning in under 20 watts. SWAN’s mixed-precision quantization creates the compact, intelligent models that make embodied AI possible.

The Death of Uniform Quantization

We analysed 2,347 tensors and found sensitivity varies by 50×. Treating all parameters equally is a fundamental mistake — and the data proves it.

AI Sovereignty on Commodity Hardware: How SWAN Breaks the GPU Cartel

Access to frontier AI shouldn’t require an NVIDIA allocation or a hyperscaler contract. SWAN enables sovereign AI capability on hardware anyone can buy.

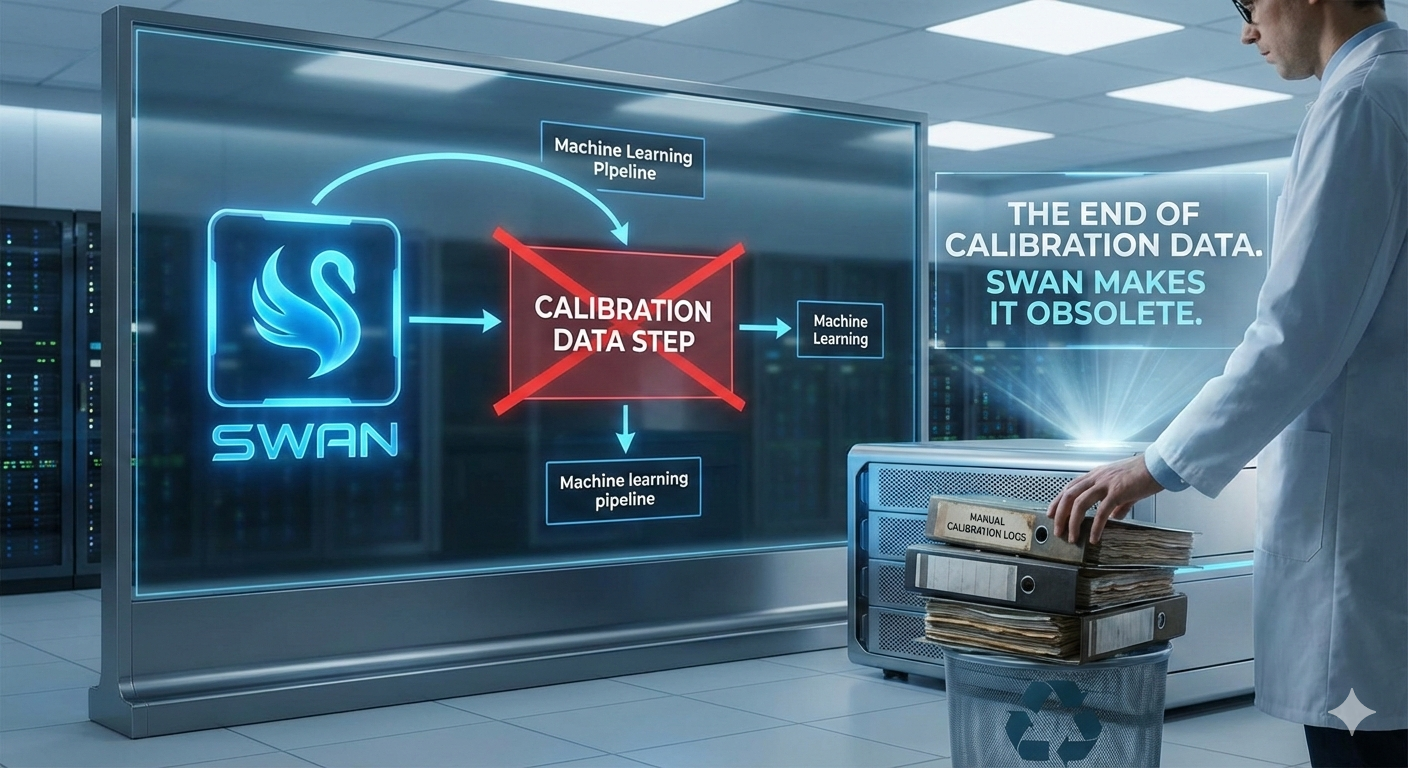

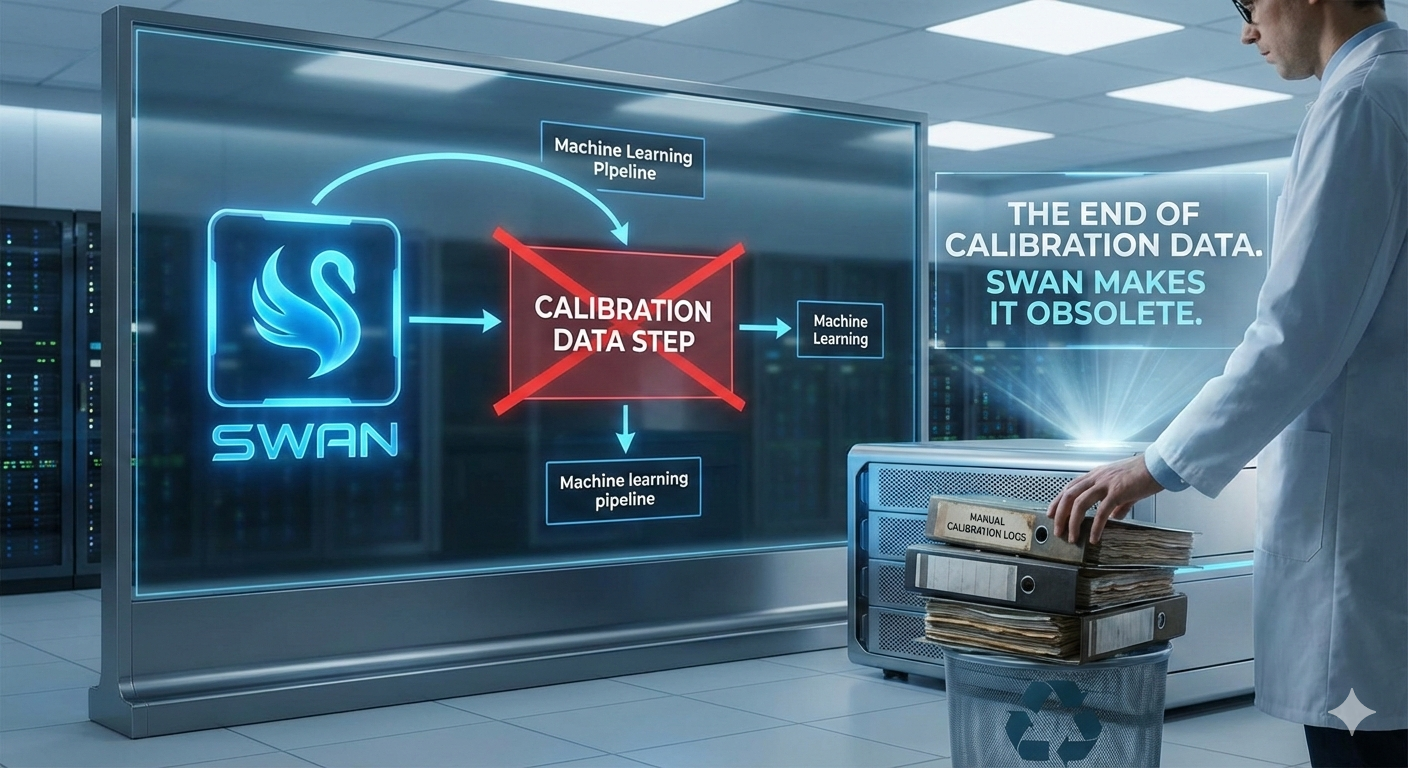

The End of Calibration Data

Every competitive quantization method requires calibration data. SWAN needs nothing but the weights themselves. An entire category of ML infrastructure just became obsolete.

Why Your 4-bit Model is Leaving Intelligence on the Table

Sensitivity varies by 50x across tensors. Uniform 4-bit simultaneously over-compresses critical weights and under-compresses insensitive ones. The numbers prove it.

AI Without Permission: Privacy, Sovereignty, and the Case for Local Inference

Every API call is a data disclosure. SWAN makes truly private, permissionless AI possible on hardware you own — no network, no logs, no content policies.

Profiling Expert Activation Patterns in 512-Expert MoE Models

How we profiled 30,720 experts across two large MoE models, what the activation patterns revealed, and why the numbers challenge common assumptions about expert redundancy.

Per-Expert Mixed-Bit Quantization via Mask-and-Combine Dispatch

We built a custom kernel that assigns different bit widths to individual experts in MoE models. It preserved model quality perfectly — and was too slow to use in production.

Expert Pruning in MoE Models — When Dead Experts Aren't Dead

We pruned 18% of experts from a 512-expert MoE model based on activation profiling. The model passed all automated quality tests. Then we looked at the actual responses.

MLX Quantization on Apple Silicon — Engineering Pitfalls and Workarounds

We quantized 400B+ parameter MoE models on a 512 GB Mac Studio using MLX. Along the way, we found a data corruption bug, a dtype footgun, and a GPU timeout trap.

Layer-Level vs Expert-Level Granularity in MoE Quantization

We compared three granularities of bit allocation for MoE quantization. The finest granularity was the slowest and barely the best.

Why Collapse Tests Are Insufficient for Quantization Quality Assessment

Three different quantization variants all scored 15/15 on automated quality tests. One couldn't translate a sentence into Spanish.