For over a decade, model quantization has required calibration data (representative samples that guide the compression process). RAM eliminates this requirement entirely. The implications go far beyond convenience: an entire category of ML infrastructure, data licensing, and pipeline complexity just disappears.

The Calibration Tax

Every major quantization method in production today (GPTQ, AWQ, SqueezeLLM, QuIP, OmniQuant) requires a calibration dataset. This isn't a minor implementation detail. It's a structural dependency that ripples through the entire model deployment pipeline.

Here's what calibration actually costs you:

The Hidden Cost Chain

This is the calibration tax: a mandatory overhead that every team pays, every time they quantize a model. It adds days to the deployment pipeline, creates legal exposure, requires GPU compute, and introduces non-determinism that makes debugging production issues harder.

What RAM Does Differently

RAM is entirely data-free. No forward passes, no activation statistics, no training data, no calibration samples. The compression process needs nothing beyond the model weights themselves.
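To make "weights in, quantized weights out" concrete, here is a minimal sketch of a generic weights-only quantizer. It is plain symmetric round-to-nearest, not RAM's algorithm (which this article does not describe), and the function names are made up for illustration. The point is only that nothing besides the weights enters the function.

```python
# Illustrative only: generic round-to-nearest quantization, NOT RAM's method.
# The sole input is the weights; no samples, activations, or forward passes.

def quantize_group(weights, bits=4):
    """Symmetric round-to-nearest quantization of one group of float weights."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for signed 4-bit
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Clamp each code to the signed integer range [-qmax - 1, qmax].
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return codes, scale

def dequantize_group(codes, scale):
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]

weights = [0.70, -0.30, 0.12, -0.04]
codes, scale = quantize_group(weights)
restored = dequantize_group(codes, scale)
print(codes)
print([round(r, 3) for r in restored])
```

A data-free method has to decide things like bit allocation from weight statistics alone; this sketch skips that and uses a fixed 4 bits.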

This isn't a rough approximation. RAM-quantized Qwen3.5-397B achieves a perplexity of 4.283 versus 4.298 for uniform 4-bit quantization with calibration (lower is better). The data-free method doesn't just match calibrated quantization. It beats it, by 0.015.
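For a sense of scale, the gap between the two perplexity figures can be worked out directly. The snippet uses only the two numbers quoted above; the variable names are ours.

```python
ram_ppl = 4.283         # RAM, data-free (figure quoted above)
calibrated_ppl = 4.298  # uniform 4-bit with calibration (figure quoted above)

abs_gap = calibrated_ppl - ram_ppl
rel_gap = abs_gap / calibrated_ppl

print(f"absolute gap: {abs_gap:.3f}")   # absolute gap: 0.015
print(f"relative gap: {rel_gap:.2%}")   # relative gap: 0.35%
```

The margin is narrow, so the practical takeaway is parity or slightly better without any calibration data.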

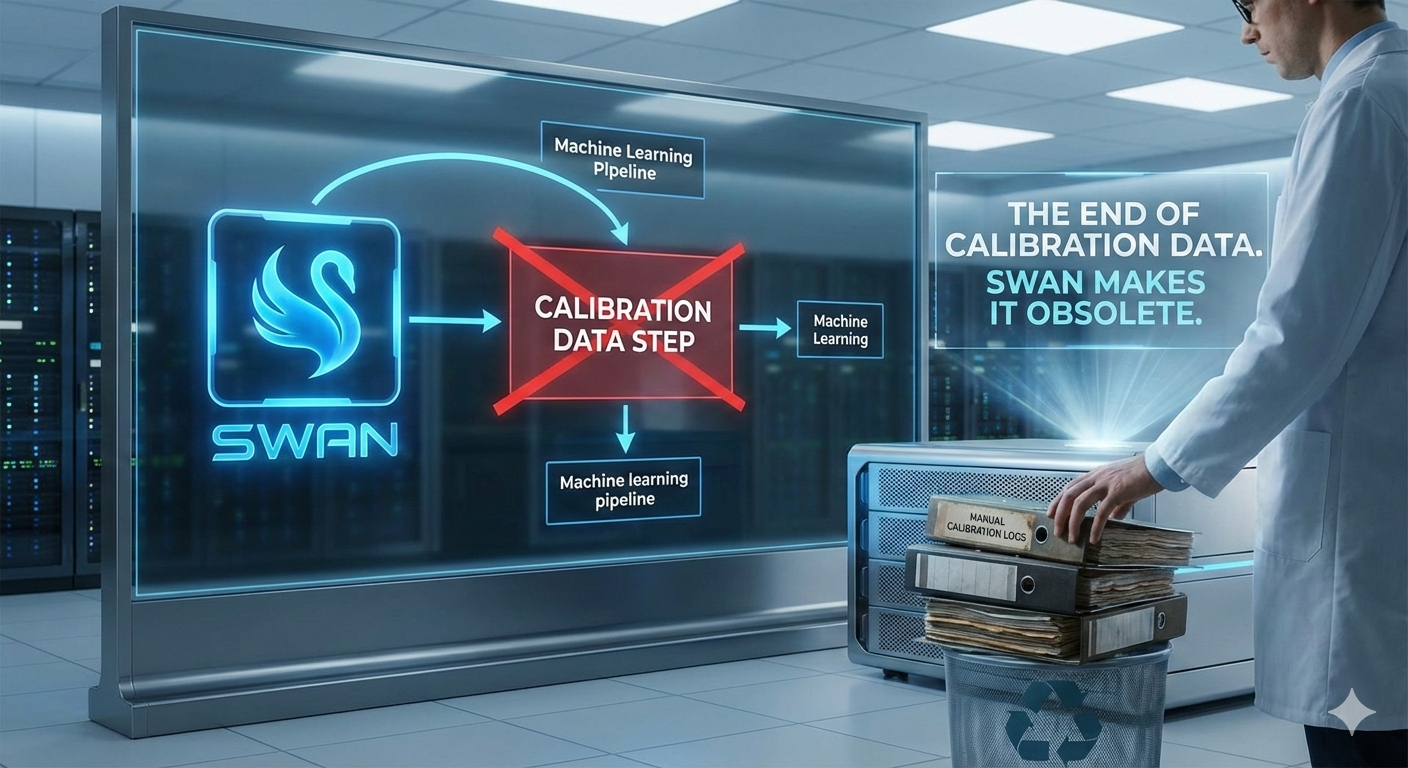

The Pipeline Before and After

Here's what a typical quantization pipeline looks like with calibration-dependent methods versus RAM:

Traditional (GPTQ/AWQ)

- Download model weights

- Source calibration dataset

- Verify data licensing

- Prepare & tokenize calibration data

- Load model on GPU cluster

- Run calibration forward passes

- Tune quantization hyperparameters

- Apply quantization

- Validate output quality

- Debug calibration-data-dependent artifacts

- Deploy

6 steps require calibration data · GPU cluster needed · Hours to days

RAM

- Download model weights

- Run RAM analysis (CPU)

- Apply quantization

- Validate output quality

- Deploy

0 steps require calibration data · No GPU needed for analysis · 13 minutes

Six calibration-dependent steps eliminated. Not automated, eliminated. The infrastructure to store, license, version, and process calibration datasets is no longer needed. The GPU compute for calibration passes is gone. The debugging of calibration-dependent quality issues is gone.
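The two step lists above can be compared mechanically. In this sketch the step names come straight from the lists; the flags marking which steps depend on calibration data are our reading of them, not something the lists state.

```python
# True marks steps assumed to depend on calibration data (our reading).
traditional = [
    ("Download model weights", False),
    ("Source calibration dataset", True),
    ("Verify data licensing", True),
    ("Prepare & tokenize calibration data", True),
    ("Load model on GPU cluster", False),
    ("Run calibration forward passes", True),
    ("Tune quantization hyperparameters", True),
    ("Apply quantization", False),
    ("Validate output quality", False),
    ("Debug calibration-data-dependent artifacts", True),
    ("Deploy", False),
]
ram = [
    "Download model weights",
    "Run RAM analysis (CPU)",
    "Apply quantization",
    "Validate output quality",
    "Deploy",
]

data_steps = [name for name, needs_data in traditional if needs_data]
shared = [name for name, _ in traditional if name in ram]

print(f"traditional: {len(traditional)} steps, {len(data_steps)} need calibration data")
print(f"RAM: {len(ram)} steps, {len(shared)} shared with the traditional pipeline")
```

Under that reading, the pipeline goes from eleven steps to five: the six data-dependent steps disappear, and the GPU load step is replaced by the CPU analysis step.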

Why This Matters for Production

Deterministic reproducibility

Run RAM twice on the same model. You get identical results. Every time. No variance from calibration data sampling, no sensitivity to sequence length choices, no dependency on random seeds. This matters enormously for regulated environments where model behaviour must be reproducible and auditable.
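One simple way to audit this kind of reproducibility is to fingerprint the quantized output and compare hashes across runs. The sketch below assumes a stand-in quantizer (`toy_quantize`, a hypothetical round-to-nearest function, not RAM) and a hypothetical `fingerprint` helper. Any deterministic, data-free quantizer could be dropped in its place.

```python
import hashlib
import struct

def fingerprint(values):
    """SHA-256 over the little-endian float64 encoding of a list of floats."""
    payload = struct.pack(f"<{len(values)}d", *values)
    return hashlib.sha256(payload).hexdigest()

def toy_quantize(weights, bits=4):
    """Hypothetical stand-in quantizer: no data, no random seed, weights only."""
    qmax = 2 ** (bits - 1) - 1
    absmax = max(abs(w) for w in weights) or 1.0   # avoid dividing by zero
    scale = absmax / qmax
    return [round(w / scale) * scale for w in weights]

weights = [0.70, -0.30, 0.12, -0.04]
first = fingerprint(toy_quantize(weights))
second = fingerprint(toy_quantize(weights))
print("reproducible:", first == second)
```

Storing the fingerprint alongside the deployed artefact gives auditors a cheap check that a re-run produced bit-identical weights.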

Zero data governance burden

In healthcare, finance, and government, using data (any data) triggers governance processes. Even "public" calibration datasets like WikiText or C4 may have terms that conflict with your organisation's data policies. With RAM, there's no data to govern. The model's weights are the only input, and you already have a licence for those.

Instant model updates

When a new model version drops (Qwen4, Llama 5, whatever comes next), teams using calibration-dependent methods have to restart their entire quantization pipeline. Re-source appropriate calibration data (the new model may have different training characteristics). Re-run calibration passes. Re-validate.

With RAM: download new weights, run analysis, deploy. Thirteen minutes from download to production-ready quantization. When models ship monthly or faster, this speed difference is the difference between running the latest and always being one version behind.

Cross-domain portability

Calibration data is domain-specific. A model quantized with English text calibration may perform worse on code. A model calibrated on general text may degrade on medical terminology. RAM's data-free approach makes quantization domain-agnostic. It produces the same result regardless of your deployment domain, because there's no calibration sample to bias it.

The Inverse Scaling Advantage

Here's the most counterintuitive property of RAM: as models get larger, calibration-dependent methods get disproportionately more expensive, while RAM's runtime grows far more slowly than model size.

Scaling Dynamics

Calibration-based (GPTQ/AWQ)

- 8B model: 1 GPU, ~30 minutes

- 70B model: 4 GPUs, ~2 hours

- 400B model: 8 GPUs, ~6+ hours

- More parameters = more memory = more GPUs = more cost

RAM (data-free)

- 8B model: CPU, ~2 minutes

- 70B model: CPU, ~5 minutes

- 400B model: CPU, ~13 minutes

- Embarrassingly parallel across shards
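The figures listed above can be turned into growth factors. This sketch treats the approximate values as exact and takes "6+ hours" as 6 hours, so the calibration numbers are a lower bound. It compares how each approach grows between the 8B and 400B rows, not GPU time against CPU time directly.

```python
# Parameters (billions) -> (GPUs, minutes) for calibration-based methods,
# taken from the list above; "~" values are treated as exact.
calibrated = {8: (1, 30), 70: (4, 120), 400: (8, 360)}
# Parameters (billions) -> CPU minutes for RAM, from the list above.
ram_minutes = {8: 2, 70: 5, 400: 13}

param_growth = 400 / 8
gpu_minutes = {p: gpus * mins for p, (gpus, mins) in calibrated.items()}
calib_growth = gpu_minutes[400] / gpu_minutes[8]
ram_growth = ram_minutes[400] / ram_minutes[8]

print(f"parameters:             {param_growth:.0f}x")    # 50x
print(f"calibration GPU-minutes: {calib_growth:.0f}x")   # 96x
print(f"RAM CPU-minutes:         {ram_growth:.1f}x")     # 6.5x
```

On these numbers, a 50x increase in parameters costs roughly 96x more GPU-minutes for calibration, but only 6.5x more CPU-minutes for RAM.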

The 400B model case is where this gets dramatic. Running GPTQ calibration on Qwen3.5-397B means loading the entire model into GPU memory for forward passes. That takes at least 4 to 8 H100 GPUs, at $25,000+ each, running for hours. RAM compresses the same model on a single CPU in about 13 minutes.

As models grow to 1 trillion parameters and beyond (and they will), calibration-based methods will need increasingly expensive GPU clusters just for compression. RAM will keep running on commodity hardware.
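The "embarrassingly parallel across shards" property is what keeps this on commodity hardware: if each weight shard can be analysed independently, the work maps cleanly onto a worker pool. The sketch below is hypothetical throughout. `analyze_shard` computes a trivial statistic (the largest absolute weight) as a placeholder for whatever RAM actually computes, and the shards are tiny in-memory lists rather than files.

```python
from concurrent.futures import ThreadPoolExecutor

def analyze_shard(shard):
    """Hypothetical per-shard analysis: returns the largest absolute weight."""
    name, weights = shard
    return name, max(abs(w) for w in weights)

# Toy shards standing in for weight files; each is processed independently.
shards = [
    ("shard-0", [0.70, -0.30]),
    ("shard-1", [0.12, -0.04]),
    ("shard-2", [-0.90, 0.25]),
]

# Threads keep the example simple; CPU-bound work in pure Python would
# normally use a process pool instead.
with ThreadPoolExecutor(max_workers=3) as pool:
    results = dict(pool.map(analyze_shard, shards))

print(results)
```

Because no shard needs another shard's result, adding cores or machines shortens wall-clock time without changing the output.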

What the Industry Should Be Asking

If data-free compression can match or beat calibration-based methods, why was the industry using calibration data in the first place?

The honest answer: because nobody had demonstrated a rigorous data-free alternative. RAM shows that calibration data simply isn't required to produce high-quality compressed models. Equal or better quality, zero data dependencies, and a fraction of the time.

The Broader Implication

Model compression is following the same arc as many technologies. There's an initial phase where external resources (calibration data, fine-tuning data, human feedback) are assumed essential. Then someone discovers that sufficiently clever analysis of the artefact itself makes those resources unnecessary.

Data-free compression opens the door to a fundamentally different deployment model. Quantization becomes instant, reproducible, and carries zero data governance burden. For organisations deploying AI in regulated environments, that changes everything.